Over the last few years, technological advances have continued to accelerate to meet the growing demand for reliable connectivity and robust security. As a result, heterogeneous systems-on-chip in the IoT/IIoT ecosystem have become widely adopted across a variety of domains and industries. By nature, embedded systems are usually highly constrained in terms of performance and resources. Therefore, embedded device manufacturers and Cyber-Physical System integrators have to understand and investigate how the security vulnerabilities of individual components may affect the system as a whole. Proper identification of threats and appropriate selection of countermeasures are an effective way to reduce the ability of attackers to take control of the system and perform arbitrary operations on it.

A well-defined Hardware/Software Secure Development Lifecycle (SDL) provides an effective and proactive way to avoid vulnerabilities in the Internet of Things (IoT) and Cyber-Physical Systems (CPS). Multiple layers of robust defense must be implemented in order to thwart cyberattacks, and software applications must be developed in a secure manner. The CIA triad helps to characterize the confidentiality, integrity, and availability needs of data and devices, even in the IoT world. Achieving these objectives is a challenge, given the limited computational and power resources available.

Vulnerability assessments through offensive and defensive approaches offer crucial insights into the potential risks that deployed connected devices pose to an organization’s infrastructure. Within the CERT-DS, real-world tactics, techniques, and procedures (TTPs) are used to assess the security of embedded and cyber-physical systems. Our hardware security testing value proposition is a methodical examination of the security threats and attack surface of smart devices to ensure a secure implementation of the IoT technology stack, from its low-level hardware components to the customer-facing applications. We create synergies among cross-functional security teams to assess the security robustness of the audited system’s entire value chain.

The MITRE organization recently published a description of the most significant CWEs for embedded security. This article is intended to simplify the main concepts involved. The following paragraphs provide a detailed guideline in order to develop and deploy effective security policies regarding the most important hardware weaknesses (CWE MIHW):

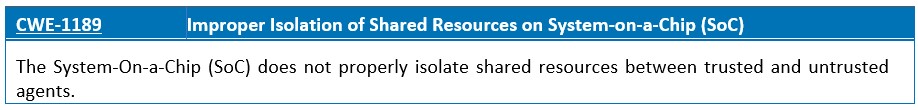

Modern SoCs utilize heterogeneous architectures where multiple IP cores have access to the shared resources of the chip. In security-critical applications, IP cores have different levels of privileges to access shared resources, which must be regulated by an access control system.

Modern security architectures generally make use of partitions to define security domains and try to impose strict information-flow policies on the messages that transit from one domain to another. This is mainly done by forcing all communications through specialized filters or gateways. The best way to implement isolation in an embedded system is to implement hardware-based isolation and define two distinct environments (secure and non-secure world). For example, shared memory protection units (SMPUs) can distinguish between different protection contexts within the processor, including differences between secure and non-secure access.
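
As a rough illustration of the kind of check a shared memory protection unit performs, the following minimal sketch models a single protected region with a secure/non-secure attribute and a set of permitted protection contexts. The `Region` type, the `KEY_STORE` address range, and the context numbers are hypothetical and chosen only for illustration; real SMPUs are configured through vendor-specific registers.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Region:
    base: int
    size: int
    secure_only: bool             # True: only secure-world masters may access
    allowed_contexts: frozenset   # protection contexts permitted to access


def access_allowed(region, addr, context, secure):
    """Return True if a bus access at `addr` passes the region's policy."""
    if not (region.base <= addr < region.base + region.size):
        return False              # default deny outside the region
    if region.secure_only and not secure:
        return False              # non-secure master hitting a secure region
    return context in region.allowed_contexts


# Hypothetical region holding key material, reachable only from
# protection contexts 0 and 1 while in the secure world.
KEY_STORE = Region(base=0x4000_0000, size=0x1000,
                   secure_only=True, allowed_contexts=frozenset({0, 1}))
```

With this model, a secure access from context 0 is accepted, while the same access from the non-secure world or from an unlisted context is rejected.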

Establishing a root of trust is essential for an embedded system. The Root of Trust (RoT) is used to validate all additional software that executes on the system. It is the first fundamental link in a chain of trust that successfully starts an embedded system. In order to provide strong security guarantees, a root of trust must be hardware-based and immutable. Here are the two main flavors or implementations of a silicon-based Hardware RoT:

- State machine-based root of trust solutions, which are designed to perform specific sets of functions like data encryption, certificate validation and key management.

- Programmable hardware-based root of trust solutions, which run either on a separate CPU within an SoC or in a specific CPU security mode (e.g., the Trusted Execution Environment provided by ARM TrustZone). These isolated execution environments run custom software and can be continuously updated.

When sharing resources, it is important to avoid mixing agents with different trust levels. Untrusted agents should not share resources with trusted agents. At the hardware level, isolation mechanisms should be configured in the strictest possible way (e.g., restricting the accessible address space to only what is necessary). At the operating-system level, modern kernels offer various isolation mechanisms (process and resource isolation, containerized applications, etc.), as well as detection mechanisms (e.g., integrity checking can help identify whether the configuration settings of shared resources have been changed).

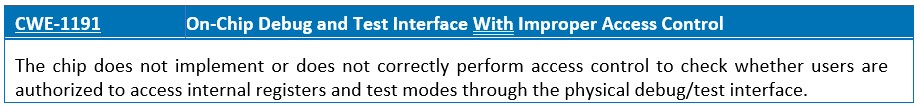

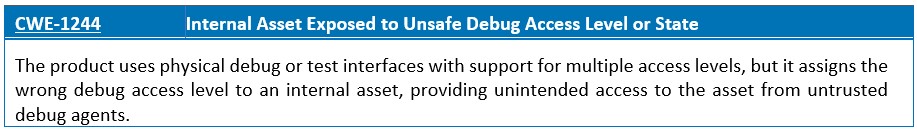

An on-chip debugger is debugging circuitry internal to the MCU. Typically, it provides generic debugging capabilities (e.g., breakpoints, watchpoints and memory inspection) and is usually accessible through a standard physical interface such as JTAG or SWD. These external ports are used during development to dump code, debug firmware source code, recover bricked hardware, and perform boundary scans. However, from a security perspective, in-circuit debuggers are the main entry point for attackers, as they have the highest possible privileges on the system by default.

In order to prevent those attacks, the most straightforward approach for product manufacturers and system integrators is to completely disable JTAG access (e.g., by disabling the TMS signal before deploying products into the market). However, it is important for the company to evaluate the risks of disabling these technologies and weigh them against the benefit of being able to diagnose and debug problems identified in the field. It can be much more difficult to remediate production issues in the product if there is no way to debug a running system. Thus, physical interfaces that provide access to a sensitive device functionality (e.g., DMA capabilities or operating system boot) must be carefully hardened to ensure the integrity of the embedded device.

There are several ways to enforce JTAG access control. Increasingly, integrators are deciding to implement a challenge-response (C/R) authentication mechanism based on a public-key cryptographic scheme that allows them to selectively re-enable debugging functions. Only devices authorized for debugging (i.e., devices that return the correct response) get full access to the debug port; otherwise, the functionality of the debug port remains limited to the C/R mechanism. Secure debug mode is usually enabled at the factory manufacturing stage and is not used during development.
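
The challenge-response flow can be sketched as follows. Note the substitution: the text describes a public-key scheme, whereas this sketch uses an HMAC-SHA256 tag over a random challenge with a shared per-device secret, only because the Python standard library has no asymmetric signature primitives. The `DebugPort` class and `sign_challenge` helper are hypothetical names for illustration, not a vendor API.

```python
import hashlib
import hmac
import secrets


class DebugPort:
    """Toy model of a debug port that stays locked until a valid response."""

    def __init__(self, device_key: bytes):
        self._key = device_key
        self._challenge = None
        self.unlocked = False

    def get_challenge(self) -> bytes:
        # A fresh random challenge per attempt prevents replay of old responses.
        self._challenge = secrets.token_bytes(16)
        return self._challenge

    def submit_response(self, response: bytes) -> bool:
        if self._challenge is None:
            return False                      # no outstanding challenge
        expected = hmac.new(self._key, self._challenge, hashlib.sha256).digest()
        self._challenge = None                # each challenge is single use
        self.unlocked = hmac.compare_digest(expected, response)
        return self.unlocked


def sign_challenge(key: bytes, challenge: bytes) -> bytes:
    """What an authorized debug tool would compute (assumed to hold the key)."""
    return hmac.new(key, challenge, hashlib.sha256).digest()
```

An authorized tool calls `get_challenge()`, computes the response with `sign_challenge`, and submits it; any other response, or a replay of an earlier one, leaves the port limited to the C/R mechanism.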

Sealing the debug ports is necessary, but not sufficient. It is therefore important to develop specific defense-in-depth mechanisms (based on appropriate risk analysis) to protect the security properties of the product from attacks, even if the debugging access control is circumvented.

Critical applications stored in executable regions of memory, such as first-level bootloaders or trusted computer databases, must be stored as read-only. This ensures that the device can be booted into a valid configuration without interference from an attacker. Without this security policy, executable code loaded after the first execution step may start in an invalid mode or configuration state.

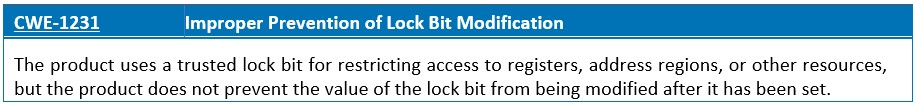

Logic locking has been developed in response to emerging threats against the hardware supply chain. For instance, these techniques can be used to mitigate the risks of IP piracy through reverse engineering, IC overproduction, and hardware Trojan insertion. Thus, security lock programming flow and lock properties must be tested in pre-silicon and post-silicon testing.

To remediate this risk, it is important to identify whether the technology that stores critical sections of memory is capable of being locked (e.g., lockable EEPROM technologies). In addition, if locking logic is implemented, the trusted lock bit must not be set in the software. Design or coding errors in the implementation of the software-defined lock protection feature may allow the lock bit to be modified or cleared by software after it has been set. There will be a window of a few milliseconds in which an attacker can abuse the unlocked state to their advantage. Thus, hardware locks, such as fuses or lock bits, should always be employed where possible.

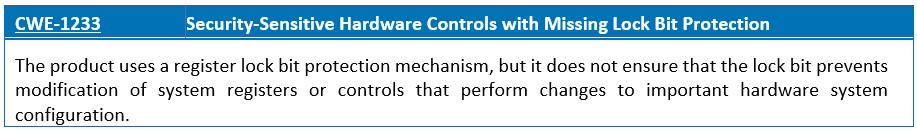

The lock bits are used to set protection levels on the different memory sections and to disable writes to a protected set of registers or address regions. The lock protection is intended to prevent modification of certain system configurations (e.g., the MPU configuration). However, if the lock bit does not effectively write-protect all system registers or controls that could modify the protected system configuration, then an attacker may be able to use software to access the registers/controls and modify the protected hardware configuration.
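
The coverage gap described above can be illustrated with a small model. This is a minimal sketch under stated assumptions: the register names (`MPU_BASE`, `MPU_LIMIT`, `MPU_ALIAS`) are hypothetical, and the `RegisterFile` class only mimics a sticky lock bit in software; it is not a description of any particular device.

```python
class RegisterFile:
    """Toy register file whose lock bit protects only `lock_covered` registers."""

    def __init__(self, lock_covered):
        self.regs = {}
        self.locked = False
        self._lock_covered = set(lock_covered)

    def lock(self):
        self.locked = True                    # sticky until reset in this model

    def write(self, name, value):
        if self.locked and name in self._lock_covered:
            return False                      # write blocked by the lock bit
        self.regs[name] = value
        return True


def uncovered(config_regs, lock_covered):
    """Registers that affect the protected configuration but escape the lock."""
    return sorted(set(config_regs) - set(lock_covered))


# Hypothetical design: three registers influence the MPU configuration,
# but the lock bit was wired to only two of them.
CONFIG_REGS = ["MPU_BASE", "MPU_LIMIT", "MPU_ALIAS"]
LOCK_COVERED = ["MPU_BASE", "MPU_LIMIT"]
```

Here `uncovered(CONFIG_REGS, LOCK_COVERED)` reports `MPU_ALIAS`, and a write to that register still succeeds after `lock()`, which is exactly the inconsistency a lock bit review should look for.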

It is therefore important to lock the access to the hardware safety and security mechanisms that protect the integrated circuit from a major failure that would present a possible safety and environmental hazard (e.g., turning off the electronic board if extreme temperatures are reached). Thus, lock bit protections must be reviewed for design inconsistency and common weaknesses.

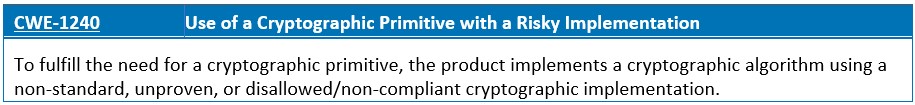

A cryptographic primitive is a low-level algorithm used to build cryptographic protocols for a security system. Using broken or weak cryptographic algorithms may result in sensitive data exposure, key leakage, broken authentication, insecure session, and spoofing attacks.

Appropriate and effective use of cryptography is mandatory to protect the confidentiality, authenticity, and integrity of data, both in transit and at rest. Special attention should be given to lightweight cryptographic algorithms for resource-constrained IoT/IIoT devices and sensor networks.

NIST test vectors can be used to informally verify the correctness of cryptographic primitive implementations, such as a block cipher or a secure hash algorithm. The use of these test vectors does not replace the validation obtained through the Cryptographic Algorithm Validation Program (CAVP), which provides validation testing of FIPS-approved and NIST-recommended cryptographic algorithms and their individual components.
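
As an example of such an informal check, the sketch below runs two well-known SHA-256 known-answer vectors (the empty message and `"abc"`) against an implementation under test. Here the implementation is Python's `hashlib`, standing in for whatever primitive the device actually ships; the `run_known_answer_tests` helper is a hypothetical name.

```python
import hashlib

# Published SHA-256 digests for the empty message and for "abc".
KNOWN_ANSWERS = [
    (b"", "e3b0c44298fc1c149afbf4c8996fb92427ae41e4649b934ca495991b7852b855"),
    (b"abc", "ba7816bf8f01cfea414140de5dae2223b00361a396177a9cb410ff61f20015ad"),
]


def run_known_answer_tests(hash_fn, vectors):
    """Return the messages for which `hash_fn` disagrees with the expected digest."""
    return [msg for msg, expected in vectors if hash_fn(msg) != expected]


def sha256_hex(msg: bytes) -> str:
    """Implementation under test; replace with the device's own primitive."""
    return hashlib.sha256(msg).hexdigest()
```

An empty list from `run_known_answer_tests(sha256_hex, KNOWN_ANSWERS)` means every vector matched. Passing a handful of vectors is a sanity check only and says nothing about side-channel resistance or edge cases.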

The purpose of cryptography is to achieve several information security-related objectives including confidentiality, integrity, authentication and non-repudiation. Thus, it is important to follow the associated minimum-security objectives (e.g., RGS ANSSI) and to regularly evaluate the implementation of cryptographic algorithms by specialized outside service providers.

All data received from external and/or untrusted sources must be properly sanitized and validated before being passed on to critical software and/or hardware components. If an application fetches data from an external endpoint through API calls and modifies a parameter based on it, the received data must be rigorously validated before the parameter is modified. Otherwise, if the partitioning is not properly done, it then becomes possible to inject malicious commands and pass through the debug channel to reach some critical resources on the embedded target.
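
A minimal sketch of validating externally supplied data before it modifies a parameter is shown below. The parameter name (`interval_s`), the accepted range, and the function name are assumptions made for illustration only.

```python
def apply_sampling_interval(config, raw_value, lo=1, hi=3600):
    """Update config['interval_s'] only if raw_value is a plain integer in range.

    The value is assumed to arrive as a string from an untrusted endpoint.
    """
    if not isinstance(raw_value, str):
        return False
    # Allow-list: ASCII digits only, with a bounded length.
    if not (raw_value.isascii() and raw_value.isdigit()) or len(raw_value) > 4:
        return False
    value = int(raw_value)
    if not lo <= value <= hi:
        return False
    config["interval_s"] = value              # modified only after all checks pass
    return True
```

The check is an allow-list rather than a deny-list: anything that is not a short run of ASCII digits within the expected range is rejected, so an input such as `"120; reboot"` never reaches the parameter.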

In certain cases, JTAG access is disabled after the ROM code is executed. This means that JTAG access is possible when the system executes the ROM code before passing control to the embedded firmware. This allows an attacker to modify the boot flow and successfully bypass the secure boot process.

Thus, it is recommended to restrict programming and debug access to secure and non-secure resources in the system by defining configurable debug access levels (DAL). In addition, adding tamper-resistant protections to the device will increase the difficulty and cost for accessing scan path testing and debug interfaces.

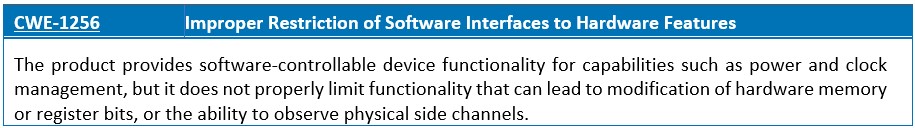

By analyzing the processor microarchitecture, it is possible to identify accessible registers and memory locations during the runtime of the application and perform fault injection attacks. It is recommended to implement proper access control to protect software-controllable features that may alter physical operating conditions of embedded devices, such as clock frequency and voltage.

Fault injection attacks (FIA) are physical interventions that exploit the circuit’s direct implementation. Moreover, clock and power management is critical for any embedded electronic system that aims to be power efficient. For instance, Dynamic Voltage and Frequency Scaling (DVFS) components save energy by adjusting the processor’s operating voltage and clock frequency. For the sake of clarity, consider a hardware design that implements a set of software-accessible registers for frequency and voltage scaling, but does not control access to these registers. By changing the clock or voltage of the target, attackers may cause register and memory changes and race conditions to achieve a desired effect such as skipping an authentication step, elevating privileges, or altering the output of a cryptographic operation.
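
The missing control in that design can be sketched as a gate in front of the DVFS registers. The voltage and frequency windows, the default operating point, and the `DvfsController` class are hypothetical values and names used only to illustrate the idea; real limits come from the silicon vendor's characterization data.

```python
class DvfsController:
    """Toy DVFS block that rejects unprivileged or out-of-window requests."""

    SAFE_MV = range(900, 1201)    # hypothetical safe voltage window, in mV
    SAFE_MHZ = range(100, 801)    # hypothetical safe frequency window, in MHz

    def __init__(self):
        self.voltage_mv = 1100
        self.freq_mhz = 400

    def set_operating_point(self, voltage_mv, freq_mhz, privileged):
        if not privileged:
            return False          # only trusted software may touch DVFS registers
        if voltage_mv not in self.SAFE_MV or freq_mhz not in self.SAFE_MHZ:
            return False          # refuse points that could induce faults
        self.voltage_mv, self.freq_mhz = voltage_mv, freq_mhz
        return True
```

Both checks matter: the privilege check stops untrusted code from reaching the registers at all, and the window check limits the damage if trusted code is itself tricked into requesting an unsafe operating point.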

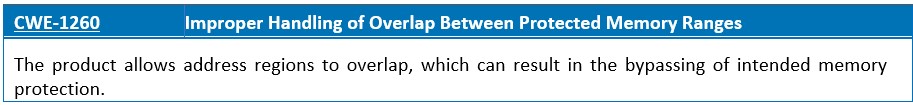

Process isolation in embedded systems programming is the separation of different processes to prevent them from accessing memory space that does not belong to them. This can be achieved by providing different levels of privileges to certain threads and by limiting the memory usage of these programs.

If the memory accessible by the attacker can be effectively controlled, it may be possible to execute arbitrary code, as in the case of a standard buffer overflow. If the attackers can overwrite the memory of a pointer, they can redirect the function pointer to their own malicious code.

To prevent code execution in a protected system, the operating system must define a number of regions to cover the different areas of the main memory map. Memory priority schemes must be leveraged if the memory regions can overlap. This can be done as a static scheme, during the boot sequence that persists while the system is running. Alternatively, more complex systems may dynamically assign (and remove) regions as tasks start and end, or as the software context changes.

The Memory Protection Unit (MPU) is commonly adopted in embedded processors to mark memory regions with security attributes to prevent arbitrary physical access to memory. It is recommended to ensure that memory regions are isolated as intended and that access control policies (read, write and execute) are used by the hardware to protect privileged software. For all programmable memory protection regions, the MPU design can define a priority scheme. For example, the RISC-V Physical Memory Protection Unit (PMP) defines a finite number of PMP regions that can be individually configured to apply access permissions to a range of addresses in memory.
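
The effect of a priority scheme on overlapping regions can be sketched as follows. The model follows the RISC-V PMP convention that the lowest-numbered matching entry decides the outcome; it is simplified to accesses from less-privileged modes, where an access matching no entry is denied. The `PmpRegion` type and the address ranges are hypothetical.

```python
from typing import NamedTuple


class PmpRegion(NamedTuple):
    base: int
    limit: int     # exclusive upper bound
    perms: str     # any subset of "rwx"


def check_access(regions, addr, kind):
    """Return True if an access of `kind` ('r', 'w' or 'x') at `addr` is allowed.

    `regions` is ordered by priority: index 0 is the highest priority entry.
    """
    for region in regions:
        if region.base <= addr < region.limit:
            return kind in region.perms   # first match wins, later entries ignored
    return False                          # no match: deny (less-privileged modes)


# Hypothetical layout: a read/execute boot ROM carved out of a larger
# read/write region that overlaps it.
REGIONS = [
    PmpRegion(0x0000, 0x1000, "rx"),      # entry 0: boot ROM
    PmpRegion(0x0000, 0x10000, "rw"),     # entry 1: general RAM window
]
```

A write to `0x0800` is denied even though entry 1 would allow it, because entry 0 matches first. Swapping the two entries would silently make the boot ROM writable, which is why the ordering of overlapping regions deserves review.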

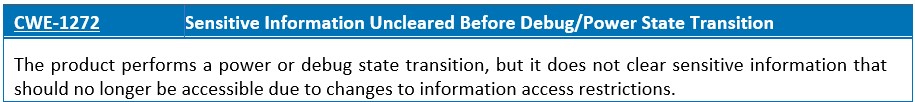

A device or system frequently uses multiple power and standby states during normal operation (e.g., normal power, extra power, low power, deep standby, etc.). A device may also operate in a debug state. When it comes to the security of semiconductor devices, both remanence and data retention issues could lead to possible data recovery by an attacker (e.g., SRAM and Flash/EEPROM). Many embedded devices perform cryptographic and other security-related computations using secret keys or other variables that an attacker should not be able to read or modify.

State transitions, in the form of intermediate computation results, are the most traditional source of side channel leakage. If there is information available in the previous state that should not be available in the next state and is not properly removed before the transition to the next state, sensitive information can leak from the system.

State-transition diagrams must be carefully designed to avoid exposing secret data prior to the transition to subsequent states. In this case, the test strategy may be to write a known pattern to each sensitive location, enter the desired power/debug state, and read the data from the sensitive locations. If the reads are successful and the data is the same as the originally written pattern, the logical architecture should be hardened to protect, mitigate, and remove vulnerabilities related to the transition between the debug state and the power state.
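
The test strategy above can be sketched against a simulated device. The `SimulatedDevice` class, the pattern value, and the addresses are hypothetical; on real hardware the reads and writes would go through a debug probe or test harness rather than a Python dictionary.

```python
PATTERN = 0xA5A5A5A5   # known pattern written to each sensitive location


class SimulatedDevice:
    """Toy device that may or may not scrub memory on a state transition."""

    def __init__(self, scrub_on_transition):
        self.memory = {}
        self.state = "normal"
        self._scrub = scrub_on_transition

    def write(self, addr, value):
        self.memory[addr] = value

    def read(self, addr):
        return self.memory.get(addr, 0)

    def enter_state(self, new_state):
        if self._scrub:
            self.memory = {addr: 0 for addr in self.memory}   # clear before switching
        self.state = new_state


def leaking_locations(device, sensitive_addrs, target_state):
    """Write the pattern, change state, and report addresses that still hold it."""
    for addr in sensitive_addrs:
        device.write(addr, PATTERN)
    device.enter_state(target_state)
    return [addr for addr in sensitive_addrs if device.read(addr) == PATTERN]
```

A non-empty result means the pattern survived the transition and the location needs hardening; an empty result for every power and debug state is the expected outcome.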

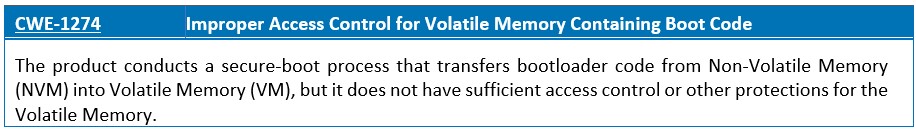

The boot process initializes the major hardware components, verifies the executed code (multi-stage boot loader and operating system/application) and results in an environment determined to be in an uncompromised state. A typical secure SoC boot process includes fetching the next-stage code (boot loader) from non-volatile memory (e.g., serial or SPI Flash) and transferring it to the faster internal volatile DRAM/SRAM memory.

It is recommended to strengthen the design of volatile memory protections to prevent data tampering and protect sensitive information from disclosure attacks. It is essential to test volatile memory protections and use lockable blocks in the memory array to ensure that they are protected against untrusted modifications or code injections. Only trusted agents should be allowed to write to memory regions. For example, pluggable device peripherals with misconfigured access control security levels should not have write access to program memory regions.

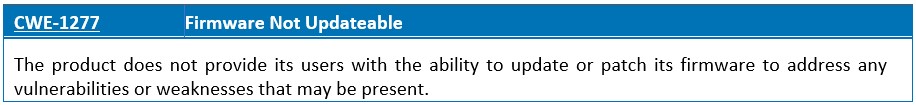

IoT products require firmware update capability in order to ensure that security fixes can be applied. Without the ability to patch or update firmware, consumers will be vulnerable to exploitation of any known vulnerabilities, or any vulnerabilities discovered in the future. Therefore, the device software/firmware must be able to receive an automatic update Over-The-Air (OTA) via a secure communication channel.

The firmware update process should ensure that:

- The authenticity and integrity of the firmware image are protected, to prevent an attacker from flashing a malicious firmware image or an image from an unknown and untrusted source.

- The confidentiality of the firmware image is protected, to safeguard against attempts to access the code in plaintext and subsequently disclose Data Encryption Key (DEK) values.

In addition, the firmware must be securely stored in the repository. The transmitted update files should be signed by an authorized trust agent and encrypted using approved encryption primitives (e.g., as validated under NIST FIPS 140-2). Once the firmware has been securely delivered to the IoT device, it must be flashed. Between verification and flashing, attacks such as time-of-check-to-time-of-use (TOCTOU) are possible. Thus, the integrity of the firmware update image must also be checked after it has been flashed to memory. If the update process goes wrong (e.g., a corrupted binary is flashed), the update mechanism should be able to revert the changes and fall back to the last known working condition (e.g., roll back the firmware when code is identified as damaged or corrupted).
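
The verify, flash, re-verify, and roll back sequence can be sketched as follows. Two simplifications are assumed and should be read as such: an HMAC-SHA256 tag stands in for the vendor's signature scheme (the Python standard library offers no asymmetric signatures), and flash memory is modeled as a dictionary with a single `active` slot. All function names are hypothetical.

```python
import hashlib
import hmac


def verify_image(image: bytes, tag: bytes, key: bytes) -> bool:
    """Check the authenticity tag over the image (HMAC as a signature stand-in)."""
    expected = hmac.new(key, image, hashlib.sha256).digest()
    return hmac.compare_digest(expected, tag)


def apply_update(flash, image, tag, key, write_fn=None):
    """Flash `image` into flash['active'], rolling back if anything fails."""
    if not verify_image(image, tag, key):
        return False                      # reject before touching flash
    previous = flash["active"]            # keep the last known working image
    write = write_fn or (lambda data: data)
    flash["active"] = write(image)        # flashing step (may corrupt the data)
    # Re-check what actually landed in flash, not the buffer verified earlier.
    if not verify_image(flash["active"], tag, key):
        flash["active"] = previous        # roll back to the previous image
        return False
    return True
```

The second `verify_image` call is the point of the sketch: it checks the bytes read back from flash, so a modification or corruption that happens between the first check and the write is caught and reverted. The optional `write_fn` parameter exists only so that a faulty flashing step can be simulated.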

However, some key factors affect the security of IoT firmware updates, including:

- Unauthorized access to code-signing keys or firmware signing mechanisms

- Insecure coding and lack of firmware hardening

- Insecure software supply chain

- Test services left available in production devices with sensitive information (e.g., hardcoded credentials).

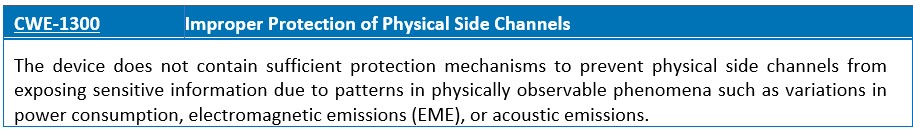

Side-channel attacks (SCA) represent a major threat to the security of cryptographic embedded devices. In an SCA, the attacker does not target the program directly but gathers information from the side effects of its execution, either by passively monitoring the activity of the device with physical probes or by exploiting the indirect physical behavior of the system.

Protecting implementations against physical attacks involves a wide variety of solutions and no single technique provides perfect security. While these attacks are extremely difficult to prevent, the designers should implement some countermeasures to dissuade them:

- Eliminate or reduce the exposure of such information by making physical tampering with a device more complex. Detectors that react to abnormal circuit behavior (light detectors, supply voltage detectors, etc.) are useful to prevent probing and fault attacks. Moreover, some circuit technologies offer inherently better resistance against fault attacks (e.g., dual-rail logic) or side-channel attacks (e.g., dynamic and differential CMOS logic styles).

- Eliminate the relationship between the leaked information and the manipulated secret data through the randomization of the clock cycles, use of random process interruptions or bus and memory encryption to increase the difficulty of successfully attacking a device.

The pre-silicon design is the best place to address any known or less-obvious attack vectors. Thus, it is important to conduct pre-silicon security verification using dynamic verification (i.e., simulation and emulation), formal verification, and manual RTL reviews. For instance, information flow analysis (such as Cadence JasperGold SPV) works by assigning a security label (or a taint) to a data input and monitoring the taint propagation. This way, the designer can verify whether the system complies with the required security policies or not.
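
The idea of taint propagation can be sketched on a toy signal graph. This is only a conceptual illustration with hypothetical signal names; it is not how any commercial tool works internally, and it ignores declassification (for instance, ciphertext is legitimately derived from the key).

```python
from collections import deque


def propagate_taint(edges, sources):
    """Return every signal reachable from the tainted `sources`.

    `edges` maps a signal name to the list of signals it drives.
    """
    tainted = set(sources)
    queue = deque(sources)
    while queue:
        signal = queue.popleft()
        for driven in edges.get(signal, []):
            if driven not in tainted:
                tainted.add(driven)
                queue.append(driven)
    return tainted


def violations(edges, secret_sources, observable_outputs):
    """Observable outputs that a secret can reach, i.e. policy violations."""
    return sorted(propagate_taint(edges, secret_sources) & set(observable_outputs))


# Hypothetical design: the key feeds the AES datapath, but also a debug mux
# that drives the JTAG data output.
NETLIST = {
    "key": ["aes_round", "debug_mux"],
    "aes_round": ["ciphertext"],
    "debug_mux": ["jtag_tdo"],
    "sensor": ["uart_tx"],
}
```

With the policy "the key must never reach `jtag_tdo` or `uart_tx`", `violations(NETLIST, ["key"], ["jtag_tdo", "uart_tx"])` flags `jtag_tdo`, pointing the designer at the debug mux path.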

Deciding on a security policy often means making tradeoffs between risk, cost and time. The characteristics of each system are different and should be considered when deciding on the security strategy. The most straightforward approach is to leverage existing security mechanisms proposed by semiconductor and IP vendors, network providers or other third parties in the value chain. However, the problem with this approach is that there is no “universal” security solution that can protect any embedded system.

Finally, good security practices require reasoning about potential attacks at each level of the system, understanding and challenging design assumptions, and implementing a defense-in-depth security posture. In addition, the designers of embedded systems must consider the state-of-the-art formal verification techniques, manual inspections, and simulation methods to detect bugs at an early rather than a late stage.

Ref.: https://cwe.mitre.org/scoring/lists/2021_CWE_MIHW.html