Unleash the performance of your HPC applications

For many years, the regular improvement of processors brought regular performance gain without any pain. Today, the increased number of compute cores, in CPUs and in accelerators or co-processors, requires a true optimization effort to get maximum performance.

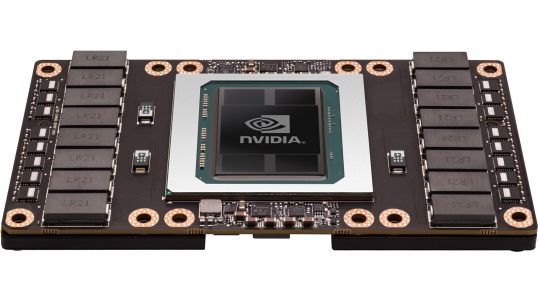

Atos Center for Excellence in Performance Programming (CEPP), operated in partnership with Intel and NVIDIA, helps you get optimal performance and maximum energy efficiency for your applications in the context of manycore technologies. The CEPP’s experts can advise you and help you analyze, optimize and port your codes.

This includes for example:

- Proofs of Concept (POCs) to demonstrate performance gains,

- workshops that give you the opportunity to exchange with experts and get started with the porting, optimization and acceleration of your simulations,

- application and solution benchmarks,

- tailored training,

- access to specific compute resources,

- the Fast Start program, which ensures your applications make the most of your Atos supercomputers from day one (porting, optimizing and configuring your applications well ahead of system delivery).

HPC is everywhere

HPC is everywhere: in our medicines, our investments, our cellphones, the films we see at the cinema, the equipment of our favorite athletes, the cars we drive and the petrol they run on. It makes our world a safer place, with ever more accurate weather, climate and seismic forecasts. All sectors, in industry and in the academic and scientific community, rely on HPC. Discover here some examples of what Atos supercomputers can do for their users, and perhaps how your research or your business can benefit from HPC.

An introduction

Deep learning is the capacity for a computer to recognize specific representations (images, texts, videos, sounds) after being shown many examples of these representations. For example, after being shown thousands of cat pictures, the program “discovers” by itself what the specific features of a cat are, and can then distinguish a cat from a dog or from any other picture.

This learning technology, based on artificial neural networks, has revolutionized Artificial Intelligence (AI) in the last five years. So much so that today, hundreds of millions of people rely on services powered by deep learning for speech or face recognition, real-time voice translation or video discovery. It is used for example by Siri, Cortana and Google Now.

Data Science Services

The High Performance Data Analytics competency center assists customers starting their cognitive projects: it recommends innovative methods and algorithms for their use cases, advises on expected application performance, and helps anticipate hardware technology trends.

Competency center staff include High Performance Computing engineers and data scientists, such as deep-learning and NLP experts.

Customers can leverage the existing competency center infrastructures, based on proven reference architectures, to test their applications while getting advice on how to make the most of them. The competency center supports customers during their proof-of-concept stages through training, webinars, workshops and dedicated services.

Atos and Deep Learning

The Atos team is actively working with its technology partner NVIDIA® to provide the Deep Learning expertise, the hardware and software solutions, and the services you need throughout the development and production stages of your Deep Learning project.

Service Operation

To keep operations efficient and control operating costs, Atos experts ensure you remain compliant with your SLAs.

Atos teams can run your operations until skills transfer and handover to your team are complete. Our service operation consists of remote monitoring coupled with event management; trouble ticketing coupled with incident management and user request management; change management with impact analysis; and an ongoing optimization service including problem management, knowledge management and documentation.

Data Center and Energy Efficiency

There are many ways to optimize energy consumption: energy-efficient hardware (with Direct Liquid Cooling technology, for example), optimized cooling systems, scheduler configuration, and the setting of energy KPIs to make your applications energy-aware.

Atos’ range of expertise in data center efficiency includes customer site analysis, logistics requirements, standard compliance, data center integration, and maintenance and support.

For example, to optimize data center design, Atos can simulate an entire computing room, including air flows for optimum cooling, and modeled cable routes and rack locations.

Training

As a standard part of the installation process, Atos provides on-site, tailored, workshop training for the system administration team. Atos can also deliver on request a range of advanced training programs for HPC users and HPC developers, which will help your teams make the most of their Atos supercomputer and run operations in an optimal way.

Proposed training courses cover application development and optimization: how to port applications, which compilation options to select, and how to improve performance, for example by improving job submissions or choosing message queues. Some training courses are dedicated to operations and system administration, OS management and tools (batch manager, file system management, network management, monitoring…). A specific data management course focuses on data assessment.


Supercomputing and meteorology

Without supercomputers, weather forecasting as we know it today would not be possible. And as the computing power available to meteorological agencies increases, weather forecasts improve in many ways.

With supercomputers, climate researchers are able to perform climate simulations at a higher resolution, to include additional processes in earth system models, or to reduce uncertainties in climate projections.

Relying on fine-grain weather forecasts to anticipate severe phenomena

Between 1992, when Météo-France invested in its first supercomputer, and today, compute capacity has increased by a factor of 500,000, and Météo-France expects the same trend to continue. Weather forecasting agencies worldwide need to:

- issue forecasts every hour

- use a finer mesh size for finer and more reliable predictions

- predict severe weather phenomena, including their exact location and time

These objectives require increased model resolution and the incorporation of a greater quantity of data and observations in the forecasting process. This means more computing resources and the capacity to handle massive data efficiently.


Simulating climate globally

Atos is a partner of the Centre of Excellence in Simulation of Weather and Climate in Europe (ESiWACE), a Horizon 2020 project on the HPC ecosystem in Europe that leverages the European Network for Earth System modelling and the world-leading European Centre for Medium-Range Weather Forecasts. The main goal of ESiWACE is to substantially improve the efficiency and productivity of numerical weather and climate simulation on high-performance computing platforms by supporting the end-to-end workflow of global Earth system modelling in an HPC environment. In addition, with the exascale era approaching, ESiWACE will establish demonstrator simulations, which will be run at the highest affordable resolutions (target: 1 km). This will yield insights into the computability of configurations that will be sufficient to address key scientific challenges in weather and climate prediction.

HPC to face the challenge of climate change

Valérie Masson-Delmotte is a French climate scientist and Research Director at the French Alternative Energies and Atomic Energy Commission, where she works in the Climate and Environment Sciences Laboratory (LSCE). She explains here how High Performance Simulation can help face the challenges of climate change.

CNAG: Unravelling the mysteries of DNA

Ivo G. Gut, Director of the Spanish Genomics Center (CNAG), explains how they analyze more than ten human genomes every day with the help of Atos supercomputers. Their ultimate purpose is to turn the sequences into valuable insight, so as to improve people’s health and quality of life.


Pirbright Institute: advancing viral research and animal health

When a deadly virus emerges, scientists must respond rapidly to characterize the virus, track its spread, and stop it from devastating livestock and possibly infecting humans. As a global leader in this work, The Pirbright Institute in the UK needs flexible high-performance computing (HPC) resources that can handle a wide variety of workloads.

Pirbright deployed an Atos supercomputer. With a unified environment running its diverse applications, Pirbright enhances scientific productivity and helps policymakers respond effectively when a viral outbreak threatens.


Boost the translation of Omics to the clinic environment

To face the demands of an ageing population, the current healthcare system needs to evolve towards a sustainable model focused on patient wellness. This revolution will only be possible by transferring research breakthroughs – from genomic research in particular – to everyday healthcare.


GENyO expands analytic capacity for precision medicine

The Center for Genomics and Oncological Research (GENyO) needed more robust infrastructure to support the rising demand for bioinformatics analysis and large-scale projects. GENyO selected a Bull supercomputer from Atos. Now the center is increasing its scientific productivity on high-profile projects that will help contribute to new precision diagnostic capabilities, pharmaceutical breakthroughs, and more efficient public health services for Spain’s Andalusian Region.


CIPF: Leveraging genomics for better diagnosis and treatment

Genome sequencing and analysis are complex tasks that demand powerful analytics platforms. The compute time needed for sequencing has been reduced considerably in recent years, making it possible to drastically increase the amount of genomic data collected on large study populations. This opens the way to a new genomics-based healthcare service, leveraging in-depth and comprehensive genomic analyses for predictive and personalized medicine. The challenge is to achieve:

- Better and predictive diagnosis

- More efficient treatments

- Customized dosing

To implement such a promising project, sequence analysis must be available on an industrial scale, and complex analytics must be supported. This requires computational power on an unprecedented scale.